The differences between human vision and computer vision and why you need domain randomization

April 24,2023Intro

Most companies believe they can go outside, snap some pictures, and train a robust Computer Vision (CV) model. As the autonomous car companies have shown, that is rarely the case. The reason is that computers learn to identify objects differently than humans.

Humans excel at recognizing familiar objects, faces, and patterns, even when presented in different orientations or with partial information. Our brain's ability to perceive depth and spatial relationships allows us to easily navigate our environment. While computer vision has made significant strides in object and pattern recognition, it often falls short compared to human capabilities, especially in tasks requiring a deep understanding of context or spatial relationships.

I walk my 2-year-old daughter to daycare every day. Along the way, I point out objects we see and identify them - “bicycle,” “bus,” “pumpkin,” “Christmas tree,” etc. It only takes a handful of examples until she catches on and starts to call out the object herself. After another dozen positive confirmations, her human cognitive abilities really shine.

She identifies a Christmas tree 15 stories up in an apartment window that I never even noticed. She points out a bus turning a corner six blocks away. It doesn’t matter if it’s dawn, dusk, rainy, snowy, foggy, or windy (we live in Chicago, so she has experienced it all and some). She can still identify objects with an accuracy that continues to impress me.

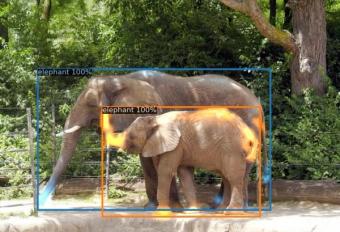

At present, CV models can’t learn the same way as humans. CV models must be exposed to a much greater diversity of examples during training, or they can fall prey to scene-object bias - when a model utilizes background information to infer an object.

Let’s say you are building a CV model to detect yellow school buses. If you go outside and collect a ‘diverse’ set of images, such as the ones below:

In the human mind, that seems like a good variety of angles and perspectives of what a school bus could look like. However, for a CV model, it may think a school bus is ‘a yellow rectangle on a black surface.’

Why do you need Domain Randomization?

The challenge is when the model sees school buses in the following scenarios. It may not be able to identify them correctly. As they aren’t ‘ yellow rectangles on a black surface.’

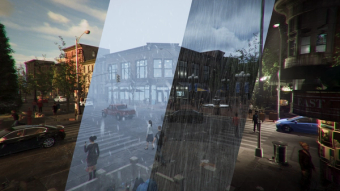

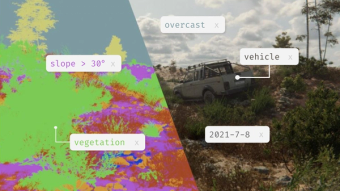

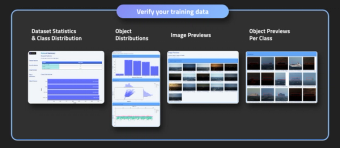

Bifrost solves this by allowing developers to randomize both objects and backgrounds - buses can be different colors and appear on various surfaces in different lighting and weather conditions.

This is called domain randomization - which is critical to building robust computer vision models. It mitigates bias and ensures that the model learns what an object looks like regardless of context.

Want to learn more about domain randomization and other techniques to build better computer vision models? Reach out at hello@bifrost.ai or here!